What Statistical Significance Actually Tells Us

‘Statistically significant’ sounds definitive—but it's not the same as large, important, or clinically meaningful. A closer look at what the term actually measures, why it's so often misunderstood, and how to read research claims more carefully.

Why the phrase sounds more definitive than it is

When you hear about a study reporting that taking product X resulted in a statistically significant increase in Y, it can sound conclusive. The math said it worked. Case closed.

But what does statistically significant actually mean?

The phrase is often treated as a synonym for large, important, meaningful, or proven. The word statistical carries authority—as if complex math has already settled the question in a black-and-white way. Many people also assume statistical significance is the same as clinical significance.

In reality, statistical significance has a narrow, technical meaning. The gap between how the term is perceived and what it actually represents creates confusion—and gives marketers plenty of room to oversell findings that may not be very strong at all.

What statistical significance technically means

Statistical significance describes how likely it is that a result at least as extreme as the one observed would arise by chance alone if there were no true effect, a framework formalized through hypothesis testing. More specifically, a result is called statistically significant when the data are inconsistent enough with the null hypothesis to cross a predefined threshold.

To understand this, consider a simplified study design.

A researcher is investigating a new supplement intended to improve hair growth. They hypothesize that supplement X will increase hair density by at least 20% compared to placebo. In statistical testing, researchers don’t try to prove their hypothesis true. Instead, they attempt to reject a competing statement: the null hypothesis. In this case, the null hypothesis might be: supplement X does not increase hair density by at least 20% compared to placebo.

Before collecting any data, the researcher defines an acceptable level of uncertainty, commonly 5%. This corresponds to a significance level (alpha) of 0.05. By choosing this threshold, the researcher accepts a 5% risk of rejecting the null hypothesis when it is actually true (a false positive).
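One way to see what a 5% significance level means is to simulate many trials in which the null hypothesis really is true and count how often they come out "significant" anyway. The sketch below is illustrative only: the function names, group size, and number of simulated trials are assumptions, and the p-value uses a simple normal approximation rather than any particular study's method.

```python
import math
import random
import statistics


def two_sided_p(group_a, group_b):
    """Approximate two-sided p-value for a difference in means (normal approximation)."""
    se = math.sqrt(
        statistics.variance(group_a) / len(group_a)
        + statistics.variance(group_b) / len(group_b)
    )
    z = (statistics.mean(group_a) - statistics.mean(group_b)) / se
    return math.erfc(abs(z) / math.sqrt(2))


def false_positive_rate(n_trials=2000, n_per_group=100, alpha=0.05, seed=1):
    """Simulate trials with no true effect and return the share with p < alpha."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(n_trials):
        # Both groups are drawn from the same distribution,
        # so any observed difference is due to chance alone.
        a = [rng.gauss(0, 1) for _ in range(n_per_group)]
        b = [rng.gauss(0, 1) for _ in range(n_per_group)]
        if two_sided_p(a, b) < alpha:
            rejections += 1
    return rejections / n_trials


rate = false_positive_rate()
print(f"Share of no-effect trials flagged as significant: {rate:.3f}")
```

The printed share should land close to 0.05: roughly one in twenty trials of an ineffective product would still cross the threshold.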

The researcher then conducts a randomized controlled trial: 25 participants receive supplement X and 25 receive a placebo. Hair density is measured before and after 12 weeks. After analyzing the data, the researcher calculates a p-value: the probability of observing a result at least as extreme as the one measured if the null hypothesis were true. The study might report:

Participants receiving supplement X showed a 28% greater increase in hair density than the placebo group (n = 25 per group, p = 0.03).

Because the p-value is below the predefined threshold of 0.05, the researcher rejects the null hypothesis and concludes that the result is statistically significant.
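To make the p-value concrete, here is a minimal Python sketch of a permutation test. It is not the analysis from the example study: the data are simulated, the function name `permutation_p_value` and all numbers are illustrative assumptions, and it tests the simpler null hypothesis that supplement and placebo do not differ at all.

```python
import random
import statistics


def permutation_p_value(treated, control, n_permutations=5000, seed=0):
    """One-sided permutation p-value for mean(treated) - mean(control)."""
    rng = random.Random(seed)
    observed = statistics.mean(treated) - statistics.mean(control)
    pooled = list(treated) + list(control)
    n_treated = len(treated)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        # If the null is true, group labels are interchangeable,
        # so reshuffling them shows what chance alone produces.
        rng.shuffle(pooled)
        diff = statistics.mean(pooled[:n_treated]) - statistics.mean(pooled[n_treated:])
        if diff >= observed:
            at_least_as_extreme += 1
    # Add 1 to both counts so the estimate is never exactly zero.
    return (at_least_as_extreme + 1) / (n_permutations + 1)


# Made-up percentage changes in hair density for 25 people per group.
data_rng = random.Random(42)
treated = [data_rng.gauss(12, 8) for _ in range(25)]
control = [data_rng.gauss(6, 8) for _ in range(25)]

p = permutation_p_value(treated, control)
print(f"p = {p:.4f} ->", "reject the null" if p < 0.05 else "do not reject the null")
```

The p-value here is simply the share of label reshufflings that produce a difference at least as large as the one observed. It says nothing about how large or useful that difference is.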

That’s it. That is all statistical significance tells us.

What statistical significance doesn’t tell us

Statistical significance does not tell us how large an effect is, whether the effect is noticeable or meaningful, whether it’s clinically important, or whether the finding is reliable or likely to replicate.

A p-value is a probability statement, not a measure of impact or usefulness. This is why scientists don’t interpret results based on p-values alone. Study design, measurement quality, sample size, and external validity often matter far more than whether a result crosses an arbitrary threshold.


Why statistically significant results can be misleading

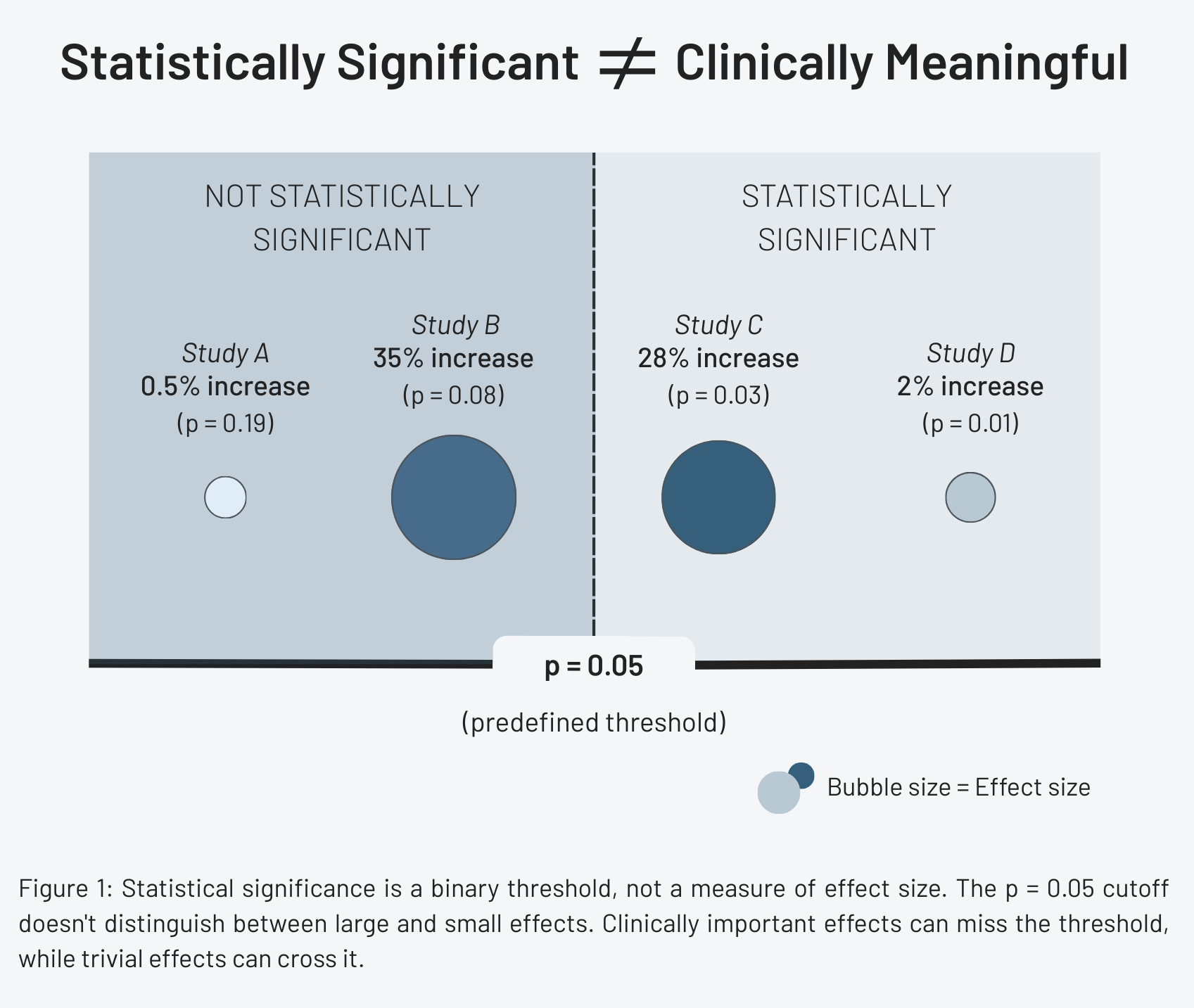

A common assumption is that statistically significant means clinically meaningful. That assumption often fails.

Consider two hair growth studies. Study A finds that supplement X increases hair density by 28%, with p < 0.05. A change of this size would likely be visible and meaningful to users. Study B finds that supplement Y increases hair density by 2% with p < 0.01.

Both results are statistically significant, but only one is practically meaningful. With a large enough sample size, even tiny, trivial effects can reach statistical significance. This is why focusing on p-values alone can exaggerate the importance of findings, especially in headlines, marketing claims, and product labels.
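The link between sample size and significance can be sketched with a few lines of arithmetic. The example below assumes a hypothetical observed effect of 0.05 standard deviations (a value chosen for illustration, not taken from either study) and a simple two-group z-test approximation, then recomputes the p-value as the groups grow.

```python
import math


def two_sided_p_from_effect(effect_in_sd, n_per_group):
    """Two-sided p-value for a standardized mean difference (z-test approximation)."""
    # Standard error of the difference between two group means, in SD units.
    standard_error = math.sqrt(2 / n_per_group)
    z = effect_in_sd / standard_error
    return math.erfc(abs(z) / math.sqrt(2))


tiny_effect = 0.05  # hypothetical: one twentieth of a standard deviation

for n in (50, 500, 5000, 50000):
    p = two_sided_p_from_effect(tiny_effect, n)
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"n per group = {n:>6}: p = {p:.3g} ({verdict})")
```

The effect never changes, only the number of participants. With 50 per group the result is nowhere near the 0.05 threshold; with 5,000 per group the same tiny effect crosses it.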

Confidence intervals provide context

While statistical significance answers a narrow probability question, confidence intervals provide far more useful information.

A confidence interval estimates a range of values within which the true effect likely lies. A 95% confidence interval means that if the study were repeated many times and an interval were computed each time, about 95% of those intervals would contain the true population value.
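The "repeated many times" idea can be checked with a small simulation. This is a sketch under stated assumptions: the true mean, standard deviation, sample size, and the name `ci_coverage` are all hypothetical, and the interval uses a known standard deviation with a normal critical value to keep the arithmetic simple.

```python
import math
import random
import statistics


def ci_coverage(true_mean=24.0, sd=10.0, n=40, n_studies=2000, level=0.95, seed=7):
    """Share of simulated studies whose confidence interval contains the true mean."""
    rng = random.Random(seed)
    # Critical value for the chosen level (about 1.96 for 95%).
    z = statistics.NormalDist().inv_cdf(0.5 + level / 2)
    half_width = z * sd / math.sqrt(n)
    hits = 0
    for _ in range(n_studies):
        # Each loop is one "repeat" of the study with a fresh random sample.
        sample = [rng.gauss(true_mean, sd) for _ in range(n)]
        sample_mean = statistics.fmean(sample)
        if sample_mean - half_width <= true_mean <= sample_mean + half_width:
            hits += 1
    return hits / n_studies


coverage = ci_coverage()
print(f"Intervals containing the true mean: {coverage:.1%}")
```

Each simulated study produces a different interval, and close to 95% of them capture the true value. Any single interval either contains it or does not; the 95% describes the procedure, not one result.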

Returning to the hair growth example, the study might report:

Supplement X increased hair density by an average of 24 follicles per square centimeter (95% CI: 19.1 to 27.8).

This tells us not only that an effect exists, but also how large it likely is, how precise the estimate is, and whether the effect is plausibly small or meaningfully large. Confidence intervals shift the focus from “is this result statistically significant?” to “how big is the effect, and how confident should we be in it?”

How to read ‘statistically significant’ claims more carefully

When you encounter claims emphasizing statistical significance, ask a few follow-up questions:

- How large was the sample size?

- What was the actual effect size?

- Is the change clinically meaningful?

- How precise is the estimate?

- Has this result been replicated?

A statistically significant result is a starting point, not a conclusion.

Why this matters

Statistical significance was never meant to serve as a stamp of truth. It is a tool for managing uncertainty, not a measure of importance or real-world impact. When p-values are treated as proof rather than context, weak evidence can be framed as decisive and small effects can be exaggerated into bold claims. Understanding what statistical significance does—and does not—tell us is essential for interpreting research responsibly, whether you’re reading a headline, evaluating a health claim, or deciding what evidence to trust.
