Why Guidelines Take Years to Reflect New Research

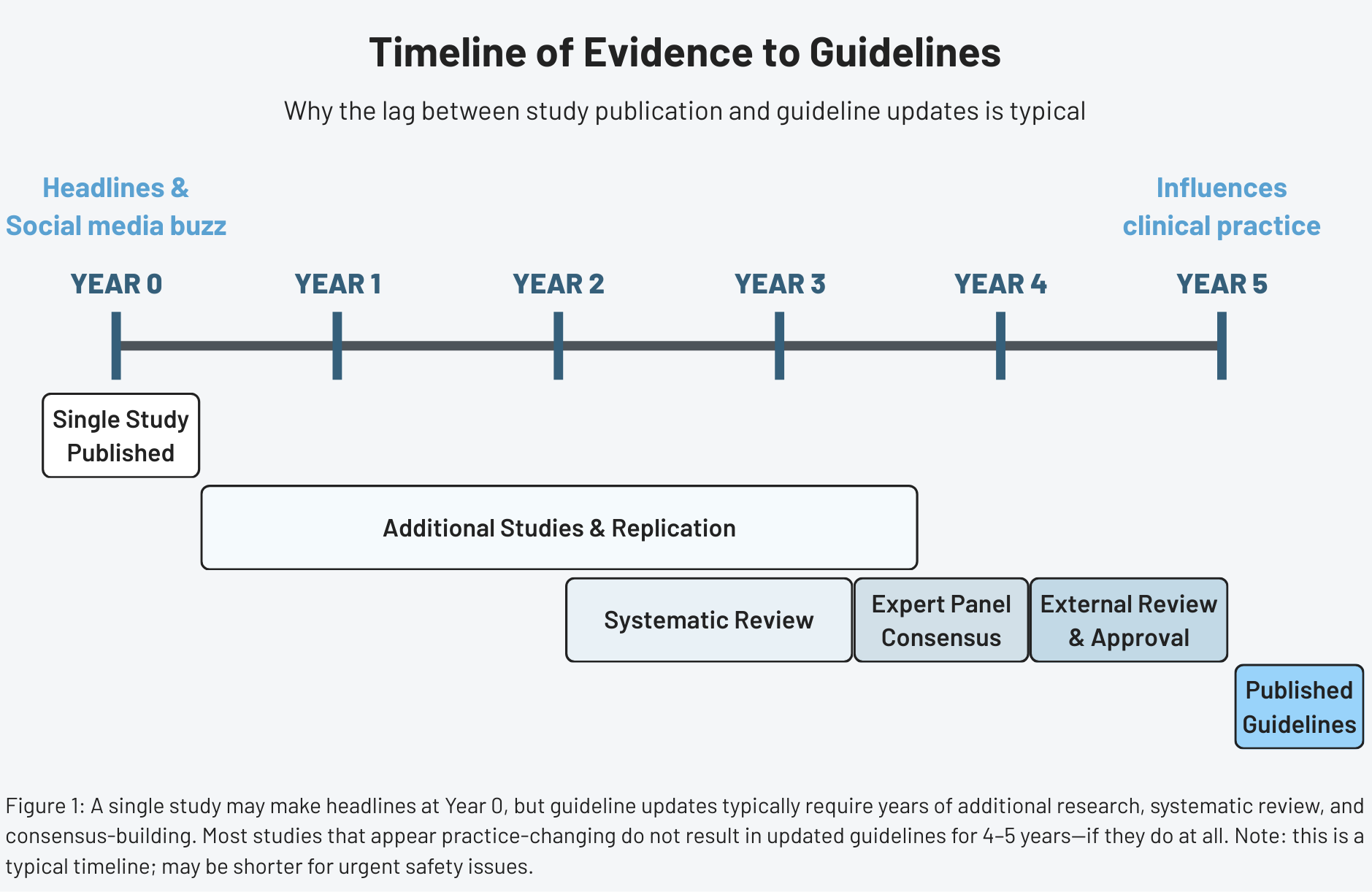

A new study makes headlines, and the guidelines don't change. A closer look at why the lag between research and clinical recommendations is intentional—and what it takes for evidence to actually shift practice.

The disconnect we notice

A new study makes headlines. Within hours, social media lights up with questions: Why hasn’t my doctor changed their advice? Why haven’t the professional guidelines updated yet? If the science just changed, shouldn’t the clinical recommendations change too?

The short answer is: not usually. Understanding why requires a closer look at what guidelines are designed to do—and what they’re not.

What guidelines are

Clinical practice guidelines are recommendations intended to optimize patient care. They’re informed by systematic reviews of evidence and assessments of the benefits and harms of different treatment options.

Guidelines translate research into actionable advice for real-world clinical settings. When evidence is strong, they provide clear direction. When evidence is weaker or conflicting, they acknowledge uncertainty and may present multiple acceptable approaches.

Guidelines are not the same as individual studies. A single study, even a large, well-designed one, answers a specific question under specific conditions. Guidelines must consider whether those findings generalize across diverse patient populations. They also balance competing considerations: efficacy, safety, feasibility, cost, patient preferences, and equity.

Guidelines carry weight. Once published, they influence how millions of patients are treated, how insurance companies make coverage decisions, and how physicians are evaluated for quality of care. This downstream impact creates pressure to get recommendations right, which inherently favors caution.

Why a single study is almost never enough

Even large, rigorous trials have limitations. They enroll select populations that may not represent all patients who would receive treatment. They’re conducted in research settings with close monitoring that doesn’t reflect routine practice. They measure outcomes over fixed time periods that may miss longer-term effects. And they can produce results that seem definitive but later turn out to be outliers.

The history of medicine is filled with landmark trials that initially seemed to change everything but later required revision. A study might show dramatic benefits that diminish when tested in broader populations. Side effects that appeared rare in controlled settings may prove more common in real-world use. Or subsequent studies may fail to replicate the original findings.

This doesn’t mean individual studies are worthless—they’re essential building blocks of medical knowledge. But guideline developers have learned through experience that waiting for multiple independent confirmations reduces the risk of changing recommendations based on findings that won’t hold up.
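
To see why confirmation matters, here is a rough back-of-the-envelope sketch in Python. The numbers are illustrative assumptions, not figures from any real trial: suppose 10% of the hypotheses being tested are actually true, trials have 80% power, and the false-positive threshold is 5%. The hypothetical function `chance_finding_is_real` applies Bayes' rule to estimate how likely it is that a positive result reflects a real effect, first after one trial and then after an independent replication.

```python
def chance_finding_is_real(prior: float, power: float = 0.8, alpha: float = 0.05) -> float:
    """Probability that an effect is real, given one statistically significant trial.

    prior: assumed share of tested hypotheses that are true (illustrative).
    power: assumed chance a trial detects a real effect.
    alpha: assumed chance a trial "detects" an effect that is not there.
    """
    true_positives = power * prior
    false_positives = alpha * (1.0 - prior)
    return true_positives / (true_positives + false_positives)


prior = 0.10  # assumption: 1 in 10 tested ideas is actually correct

# Belief after a single positive trial.
after_one = chance_finding_is_real(prior)

# An independent positive replication starts from the updated belief.
after_two = chance_finding_is_real(after_one)

print(f"After one positive trial:  {after_one:.2f}")   # 0.64
print(f"After two positive trials: {after_two:.2f}")   # 0.97
```

Under these assumed numbers, a single positive trial still leaves roughly a one-in-three chance that the finding is a false alarm, while an independent replication shrinks that to a few percent. Real evidence is messier than this toy model, but the direction of the effect is the point.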


Evidence moves faster than certainty

New studies are published constantly. In cardiology alone, thousands of research papers appear each year. If guidelines changed every time a new study suggested a different approach, recommendations would be in constant flux. Clinicians couldn’t keep up, and patients would receive unstable, ever-shifting care.

The guideline development process is designed to filter out noise. Not every new finding represents a genuine advance. Some are false positives. Others show real effects that are too small to matter clinically. And others may work in the specific populations studied but fail to generalize.

By waiting for evidence to accumulate and mature, guidelines avoid incorporating preliminary findings that later prove wrong or irrelevant. The trade-off is that when new evidence does represent genuine progress, there’s an inevitable lag before it influences practice. The conservative approach prioritizes reliability over speed.

How systematic reviews slow down change

Guideline development typically begins with systematic reviews. These reviews follow explicit methods to identify studies, assess their quality, and synthesize findings. The goal is to determine what the entire body of evidence shows—not what any single study suggests.
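
As a rough illustration of what "synthesizing findings" can mean, the Python sketch below pools three made-up trial results with inverse-variance weighting, one common fixed-effect approach to meta-analysis. The effect sizes and standard errors are invented for the example, and actual reviews may use other methods, such as random-effects models. The idea is simply that more precise studies count for more, so one small, dramatic trial does not dominate the overall estimate.

```python
import math

# Hypothetical trials: (effect estimate, standard error).
# Effects could be log risk ratios; negative means the treatment looks helpful.
trials = [
    (-0.40, 0.20),  # small early trial with a dramatic result
    (-0.10, 0.10),  # larger follow-up trial
    (-0.05, 0.10),  # another larger follow-up trial
]

# Inverse-variance weights: precise studies (small standard error) weigh more.
weights = [1.0 / se**2 for _, se in trials]

# Pooled estimate is the weighted average of the individual effects.
pooled = sum(w * effect for w, (effect, _) in zip(weights, trials)) / sum(weights)

# Standard error of the pooled estimate under the fixed-effect model.
pooled_se = math.sqrt(1.0 / sum(weights))

print(f"Pooled effect: {pooled:.2f}")      # -0.11
print(f"Pooled SE:     {pooled_se:.2f}")   # 0.07
```

In this invented example, the early trial on its own suggests a large benefit, but the pooled estimate is far more modest because the two larger trials carry most of the weight.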

Systematic reviews take time. Searching databases, screening thousands of studies, extracting data, and analyzing results is labor-intensive. By the time a systematic review is completed, months or years may have passed since the newest included studies were published. Research released after the review’s search date won’t be considered until the next update.

Once the systematic review is complete, consensus panels of experts interpret the evidence and draft recommendations. Panel members must reconcile disagreements, balance competing priorities, and determine how strongly to word each recommendation. This process is intentionally thorough, but slow.

Draft guidelines then typically undergo external review, revision, and approval by sponsoring organizations. Each step adds time, but also adds scrutiny and reduces the chance of errors or biases in the final recommendations.

Why non-scientific constraints also slow updates

Even when the science is relatively clear, practical realities can delay change.

Guideline panels are often volunteer groups that meet periodically rather than continuously. Updating a guideline requires funding, administrative support, and coordination among multiple professional societies. These logistical hurdles mean that most guidelines are revised on fixed schedules—every few years—rather than in real time.

There are also legal and regulatory considerations. Once a recommendation is published, it may become embedded in quality metrics, hospital protocols, and insurance policies. Changing those systems is far more complicated than simply rewriting a paragraph in a document.

Finally, abrupt reversals can erode trust. If guidelines swing back and forth too often, clinicians and patients may begin to doubt the credibility of the entire process. Stability has value, even when it means accepting some delay.

When guidelines should change quickly

Despite the case for deliberate caution, some situations warrant rapid guideline updates. Clear safety signals—evidence that a widely used treatment causes serious harm—should trigger immediate action. When multiple large, high-quality trials all point in the same direction, or when a new therapy shows overwhelming benefit for a life-threatening condition, waiting years for a scheduled update isn’t reasonable.

Professional societies recognize this and issue interim statements, focused updates, or rapid communications when the stakes are high. During the COVID-19 pandemic, for example, many organizations updated recommendations in near real time as new data emerged.

But these are exceptions. Most new studies don’t present that level of clarity or urgency.

The danger of chasing every new study

Reacting too quickly to preliminary evidence can cause real harm. If guidelines changed with every promising result, today’s recommended treatment might be tomorrow’s mistake. Patients could be exposed to therapies that later prove ineffective or dangerous. Clinicians would struggle to know which advice to follow. Health systems would waste enormous resources constantly revising protocols.

Science advances through iteration, correction, and occasional reversal. Guidelines exist to protect clinical care from the normal volatility of that process. They are meant to be a stabilizing force, not a reflection of the latest headline.

In that sense, slow updates aren’t a flaw. They’re a feature.

How to read “guidelines vs. new study” headlines more carefully

When you see a news story claiming that a new study “contradicts current guidelines,” ask a few questions:

- Is this based on a single study or a systematic review?

- Have the results been independently replicated?

- Does the study population resemble typical patients?

- Have professional societies issued any formal response or analysis?

Most of the time, a single paper doesn’t overturn decades of accumulated evidence. It adds one data point to a much larger picture. Good science journalism and good clinical practice require patience and context.

Why this matters

Guidelines shape real lives. They influence which medications people receive, which tests are ordered, and which treatments are considered standard of care. Because the consequences are so large, the bar for changing guidelines has to be high.

Progress in medicine is rarely linear. It’s a slow, collective process of accumulating evidence, testing assumptions, and gradually refining recommendations. The lag between research and guidelines can be frustrating, but it’s also one of the main safeguards that keeps medical care grounded in reliable knowledge rather than fleeting trends.

References

- Clinical Practice Guidelines We Can Trust, Institute of Medicine

- Clinical practice guidelines: The good, the bad, and the ugly, Injury

- Living Systematic Reviews: An Emerging Opportunity to Narrow the Evidence-Practice Gap, PLoS Medicine

- Evidence to Decision framework provides a structured “roadmap” for making GRADE guidelines recommendations, Journal of Clinical Epidemiology