Why Scientific Advice Changes (And Why That's Normal)

Scientific recommendations keep changing, and it can feel like experts can't make up their minds. This article explores why that happens, what separates genuine revision from flip-flopping, and how to interpret changing guidance more carefully.

The perception of constant change

If you’ve ever felt whiplash from reading health and science news, you’re not alone. Coffee causes cancer, then prevents it. Eggs are good for you, then bad, then good again. Masks don’t work—until they do. Fat is the enemy. But wait, sugar is worse. Actually, it's ultra-processed food. Maybe.

To many people, this pattern feels like incompetence at best and dishonesty at worst. If scientists can’t make up their minds, why should we trust what they say?

Most of what looks like flip-flopping is actually science working as intended. Understanding why scientific advice changes can help separate genuine progress from confusion and misinformation—and help maintain appropriate trust in scientific guidance.

Why it feels like scientific opinion is always changing

Scientific advice usually reaches the public through headlines, guidelines, or social media summaries. These formats compress complex and uncertain processes into simple statements that sound definitive.

"New study overturns everything we knew."

"Experts now say the opposite."

What’s usually missing is context. Scientific knowledge doesn't advance in clean, linear steps. It grows unevenly, is often messy, and sometimes contradicts earlier conclusions.

Two things amplify the sense of instability.

First, early findings tend to be overrepresented in the media. A single study, especially one with a striking result, is far more newsworthy than a null result or a slow accumulation of evidence. Early studies are also more likely to be wrong or exaggerated, because they tend to be small and because striking results are more likely to be published and covered.

Second, advice is often presented as binary when the underlying evidence is probabilistic. Recommendations are framed as “do” or “don’t” even when the actual conclusion is closer to: based on the current evidence, this is slightly more likely to help than harm for most people.

When that probabilistic conclusion shifts with new data, it can feel like reversal. In reality, it’s adjustment.
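
To make the difference between adjustment and reversal concrete, here is a minimal Python sketch. It assumes a simple Bayesian picture in which each study multiplies the odds that an intervention helps by a likelihood ratio. The three studies, their ratios, the 50% starting belief, and the 50% advice threshold are all hypothetical numbers chosen for illustration, not real data.

```python
def update(prior: float, likelihood_ratio: float) -> float:
    """Bayes' rule in odds form: posterior odds = prior odds * likelihood ratio."""
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)


def advice(probability_helps: float, threshold: float = 0.5) -> str:
    """Collapse a probability into the binary framing a headline would use."""
    return "do" if probability_helps > threshold else "don't"


# Hypothetical evidence stream: (description, likelihood ratio).
# A ratio above 1 favors "helps"; a ratio below 1 favors "does not help".
STUDIES = [
    ("small observational study", 2.0),
    ("second small study", 1.5),
    ("large randomized trial", 0.2),
]


def run(prior: float = 0.5) -> list[tuple[str, float, str]]:
    """Return (study, updated probability, binary advice) after each study."""
    history = []
    belief = prior
    for name, ratio in STUDIES:
        belief = update(belief, ratio)
        history.append((name, belief, advice(belief)))
    return history


if __name__ == "__main__":
    for name, belief, verdict in run():
        print(f"{name}: P(helps) = {belief:.3f} -> headline says '{verdict}'")
```

Under these made-up numbers, the estimated probability that the intervention helps moves from about 0.67 to 0.75 and then down to about 0.375. The underlying belief never jumps from certainty to its opposite, yet the binary headline switches from "do" to "don't" the moment the estimate crosses the threshold.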

What scientific advice actually is

Scientific advice is a synthesis of available evidence at a given moment, filtered through expert judgment about what that evidence means for real-world decisions. The process involves weighing incomplete information, acknowledging uncertainty, and making recommendations despite gaps in knowledge.

Importantly, advice is downstream from science. It’s shaped not only by data, but by how that data is interpreted and applied in real-world settings. Public health recommendations, for example, must consider population-level effects, not individual optimization. A guideline might be appropriate for most people even if it doesn’t apply equally to everyone.

Advice is also provisional—it’s meant to change as evidence improves. Problems arise when provisional guidance is communicated as settled fact.

Why evidence accumulates unevenly

Not all evidence is gathered at the same pace or with the same reliability.

Early in the study of a topic, evidence often comes from observational studies, animal models, or small trials. These studies are useful for generating hypotheses, but they’re limited in what they can establish. Confounding variables, measurement issues, and publication bias can all distort the picture at this stage.

As interest grows, larger and more rigorous studies follow. Randomized trials, better controls, longer follow-up, and replication begin to clarify which effects are real, which are exaggerated, and which disappear entirely.

This uneven accumulation creates a predictable pattern. Early studies suggest a strong effect, which draws media attention and leads to preliminary advice. Later studies then weaken, refine, or reverse the effect, and guidance changes accordingly.

The initial advice wasn’t necessarily wrong—it was based on what was known at the time.
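
Part of this pattern is statistical rather than a sign of sloppy work. The Python sketch below assumes a small true effect, many small and noisy early studies, and a rule that only estimates clearing a conventional significance cutoff draw attention. All of the numbers (`TRUE_EFFECT`, `STANDARD_ERROR`, the 1.96 cutoff, the study count) are illustrative assumptions, not values from any real literature.

```python
import random
import statistics

TRUE_EFFECT = 0.1      # assumed small real benefit (arbitrary units)
STANDARD_ERROR = 0.2   # assumed noise of one small early study
CUTOFF = 1.96 * STANDARD_ERROR  # estimate needed to look "significant"


def simulate_early_studies(n_studies: int = 10_000, seed: int = 0) -> list[float]:
    """Each study reports the true effect plus random sampling noise."""
    rng = random.Random(seed)
    return [rng.gauss(TRUE_EFFECT, STANDARD_ERROR) for _ in range(n_studies)]


def headline_estimates(estimates: list[float]) -> list[float]:
    """Keep only the studies whose estimate clears the significance cutoff."""
    return [e for e in estimates if e > CUTOFF]


if __name__ == "__main__":
    estimates = simulate_early_studies()
    headlines = headline_estimates(estimates)
    print(f"true effect:                 {TRUE_EFFECT:.2f}")
    print(f"average of all studies:      {statistics.mean(estimates):.2f}")
    print(f"average of headline studies: {statistics.mean(headlines):.2f}")
    print(f"share making headlines:      {len(headlines) / len(estimates):.1%}")
```

Because every headline study must exceed the cutoff of about 0.39, their average is necessarily several times the assumed true effect of 0.1, while the average across all studies stays close to 0.1. A later, larger trial with less noise would land near 0.1 and look like a reversal, even though no individual early study was dishonest.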

This pattern is especially common in nutrition. Studying how specific foods affect health requires following people for years or decades, since diet-related diseases develop slowly. Early evidence often comes from observational studies that track what people eat and correlate it with health outcomes. These studies can identify associations, but they struggle to establish causation.

For example, if people who eat fish have better health outcomes, is that because fish improves health, or because health-conscious people are more likely to eat fish in the first place?
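
The fish question can be made concrete with a small Python simulation. It assumes a made-up world in which fish has no effect at all on health, but health-conscious people both eat more fish and have better outcomes for other reasons. Every probability below is a hypothetical value chosen for illustration.

```python
import random


def simulate(n: int = 100_000, seed: int = 0) -> dict:
    """Return counts[(health_conscious, eats_fish)] = [people, good_outcomes]."""
    rng = random.Random(seed)
    counts = {(c, f): [0, 0] for c in (False, True) for f in (False, True)}
    for _ in range(n):
        conscious = rng.random() < 0.5
        # Health-conscious people are assumed to eat fish more often.
        eats_fish = rng.random() < (0.7 if conscious else 0.3)
        # The outcome depends only on health consciousness, never on fish.
        good = rng.random() < (0.8 if conscious else 0.5)
        cell = counts[(conscious, eats_fish)]
        cell[0] += 1
        cell[1] += good
    return counts


def rate(cells: list) -> float:
    """Share of good outcomes across one or more [people, good_outcomes] cells."""
    people = sum(cell[0] for cell in cells)
    good = sum(cell[1] for cell in cells)
    return good / people


def naive_gap(counts: dict) -> float:
    """Fish eaters vs. non-eaters, ignoring health consciousness."""
    fish = [counts[(c, True)] for c in (False, True)]
    no_fish = [counts[(c, False)] for c in (False, True)]
    return rate(fish) - rate(no_fish)


def stratified_gaps(counts: dict) -> dict:
    """The same comparison made separately within each group."""
    return {
        c: rate([counts[(c, True)]]) - rate([counts[(c, False)]])
        for c in (False, True)
    }


if __name__ == "__main__":
    counts = simulate()
    print(f"naive gap (fish vs. no fish): {naive_gap(counts):+.3f}")
    for conscious, gap in stratified_gaps(counts).items():
        print(f"gap among health_conscious={conscious}: {gap:+.3f}")
```

Under these assumptions, the naive comparison shows fish eaters doing better by roughly 12 percentage points, while the gap within each group is close to zero. Observational studies try to adjust for confounders like this, but they can only adjust for the ones they measure, which is one reason randomized trials carry more weight.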

As researchers conduct more rigorous randomized trials and higher-quality evidence accumulates, earlier findings may be confirmed, refined, or overturned. This isn’t a failure of initial research—it’s the scientific process working as intended.

Real revision vs. flip-flopping

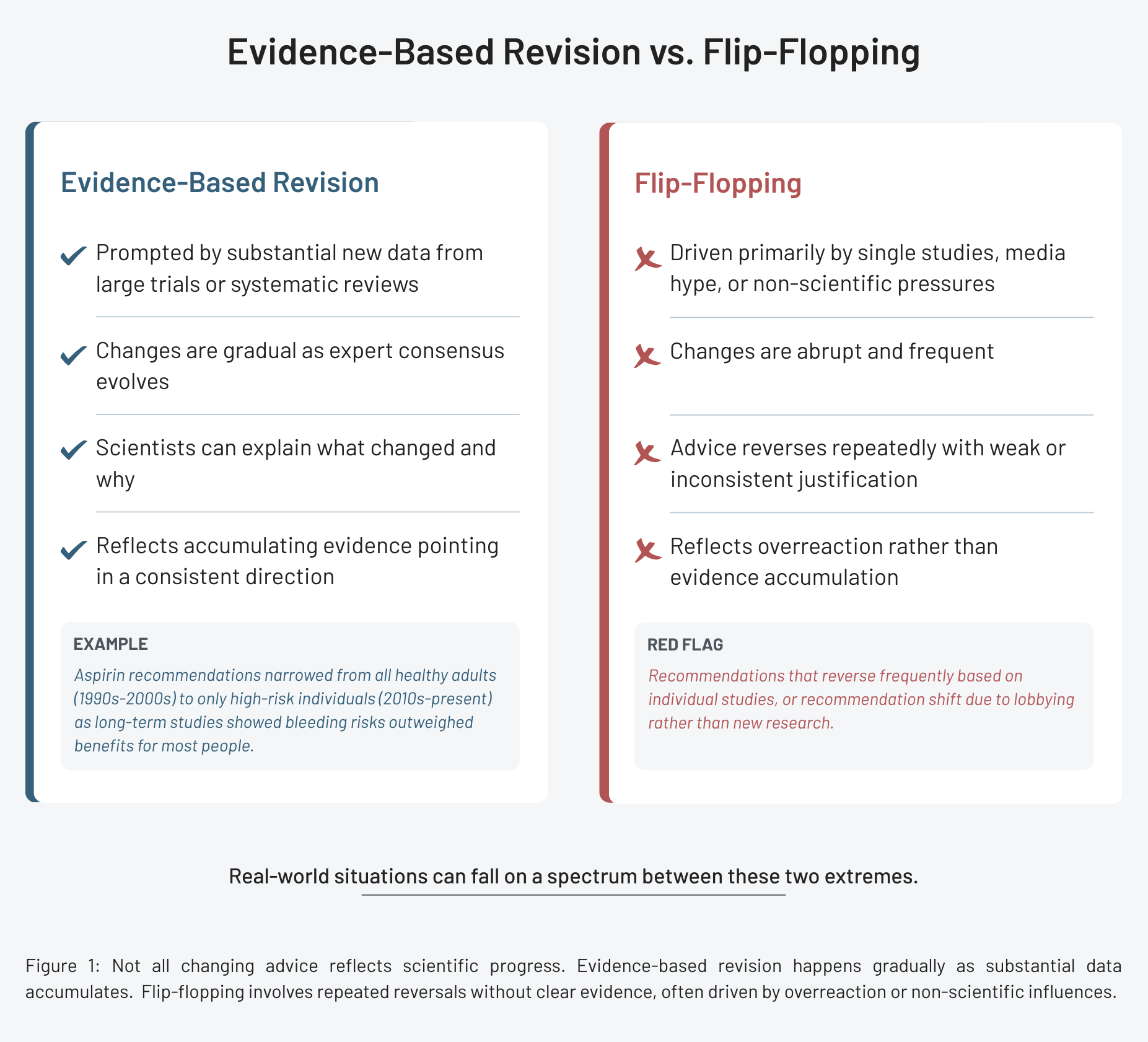

Not all changing advice reflects scientific progress. Sometimes recommendations shift for reasons unrelated to evidence: political pressure, lobbying, or individual scientists over-interpreting limited data.

Real evidence-based revision tends to share certain characteristics. First, it’s usually prompted by substantial new data: large trials, systematic reviews, or multiple independent studies pointing in the same direction. Second, the revision is typically gradual rather than abrupt, as expert consensus evolves with accumulating evidence. Third, scientists can usually articulate what changed and why, pointing to specific studies or findings that motivated the update.

True flip-flopping looks different. It involves advice that keeps reversing without clear evidence justifying each reversal. It may reflect overreaction to single studies, media hype, or non-scientific influences such as financial conflicts or political incentives.

The change in aspirin recommendations illustrates evidence-based revision. For years, low-dose aspirin was widely recommended to prevent heart attacks in healthy adults. As larger, longer-term studies accumulated, the evidence showed that bleeding risks outweighed benefits for most people without existing cardiovascular disease. Recommendations narrowed to focus on those at highest risk. This wasn’t flip-flopping; it was refinement as better evidence emerged.

What changing advice reveals about science

When handled well, changing advice reveals something important: science is a process, not a product. It shows researchers updating conclusions in response to new evidence rather than clinging to outdated beliefs. Rigid advice unchanged in the face of contradictory evidence would be far more concerning than thoughtful revision.

Revising recommendations requires intellectual honesty and humility. Scientists must acknowledge when earlier advice was based on limited evidence or when new data exposes gaps in understanding. This is harder than it sounds. Researchers invest years in particular hypotheses and may face professional or reputational costs when findings don’t hold up. The scientific system relies on peer review, replication, and independent sources of evidence to correct individual bias over time.

Changing advice also reflects the complexity of many health questions. There are rarely simple, universal answers. The optimal intervention may differ by age, genetics, health status, or baseline risk. As research uncovers these nuances, recommendations often become more targeted, and sometimes more conditional.

This can be uncomfortable for audiences accustomed to certainty. But guidance that grows more conditional is usually a sign that the science is maturing, not failing.

Reading changing advice more carefully

When advice shifts, slow down and ask a few questions:

- What kind of evidence drove the change? A large trial, meta-analysis, or reinterpretation of existing data? Be cautious if it's based on a single study.

- What outcome is being discussed? Did advice change because researchers started measuring outcomes that matter more?

- Who does this apply to? Population-level guidance may change even if individual risk remains similar.

- How confident is the recommendation? Good guidance often includes uncertainty. If certainty suddenly appears, or disappears, that’s a sign to look closer.

Why this matters

How we interpret changing scientific advice affects trust, not just in science but in institutions, medicine, and public health. When revisions are framed as failures, people become cynical. When uncertainty is hidden, later corrections feel like betrayal. But when the process is explained honestly, change becomes easier to accept.

For individuals, understanding this dynamic can prevent overreaction. It helps avoid chasing every new claim or dismissing all guidance as unreliable. For society, the stakes are even higher. Decisions about health, environment, and technology depend on the ability to update beliefs in light of new evidence. Treating change as weakness discourages learning.

The next time you see a headline about scientists reversing their position, resist the urge to dismiss it as flip-flopping. Instead, look for what new evidence emerged, whether expert consensus supports the change, and how the revision fits into the broader trajectory of evidence.

References

- Communicating scientific uncertainty, PNAS

- Foods, Nutrients, and Health: As Modern Nutrition Science Evolves, When Will Our Policies Catch Up? Lancet Diabetes & Endocrinology

- Aspirin Use to Prevent Cardiovascular Disease, JAMA

- Use (and abuse) of expert elicitation in support of decision making for public policy, PNAS